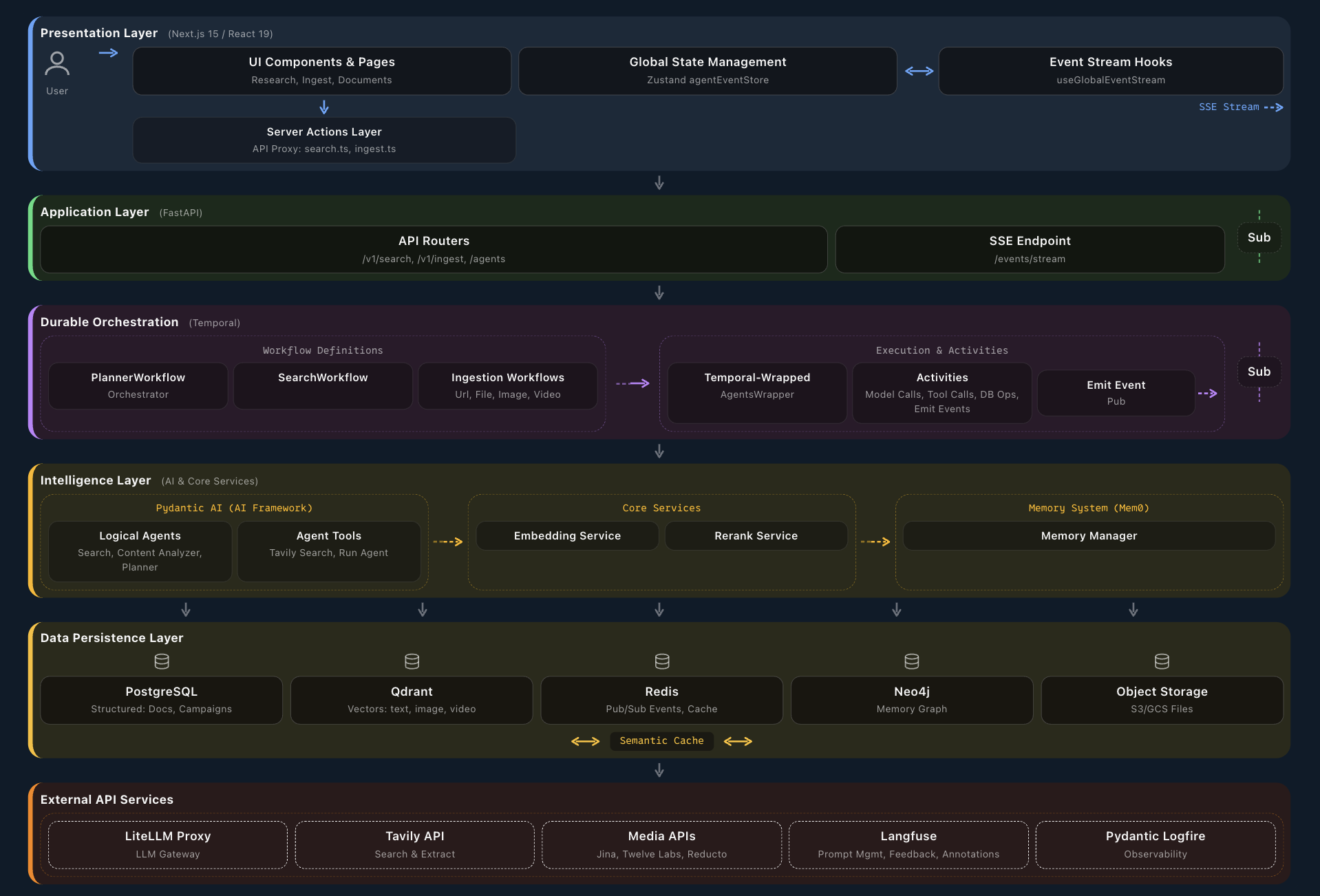

The 13 Layers in a Production Deployment

This is what the reference architecture looks like when it ships. It is not a slide deck but a real system with defined layers, technology choices, and integration points.

This reference architecture is built on Ultrathink Axon™. Read the full whitepaper for buy-versus-build guidance across all 13 layers.